When I was in medical school, I ran into a problem while attempting my first ultrasound-guided cyst aspiration. I was immediately annoyed that my right hand was occupied by a needle, my left hand by the wireless ultrasound, and my vision was constantly switching between the cyst and the ultrasound display screen. Showing my naivete, I went too deep, poked a blood vessel, and caused a hematoma. It’s not the biggest problem in the world, but it still diminished the patient experience. We tell our patients during consent that we could cause a hematoma, but there was certainly still a sense of frustration.

So, our team set out to create a system for POCUS (point-of-care ultrasound) procedures that did two things. One, eliminate the constant head-turning and attention-shifting that was, itself, a distraction from the procedure. And two, create a way to record procedures so that they could be data-mined for machine learning, with the intent of developing an “optimal” training method.

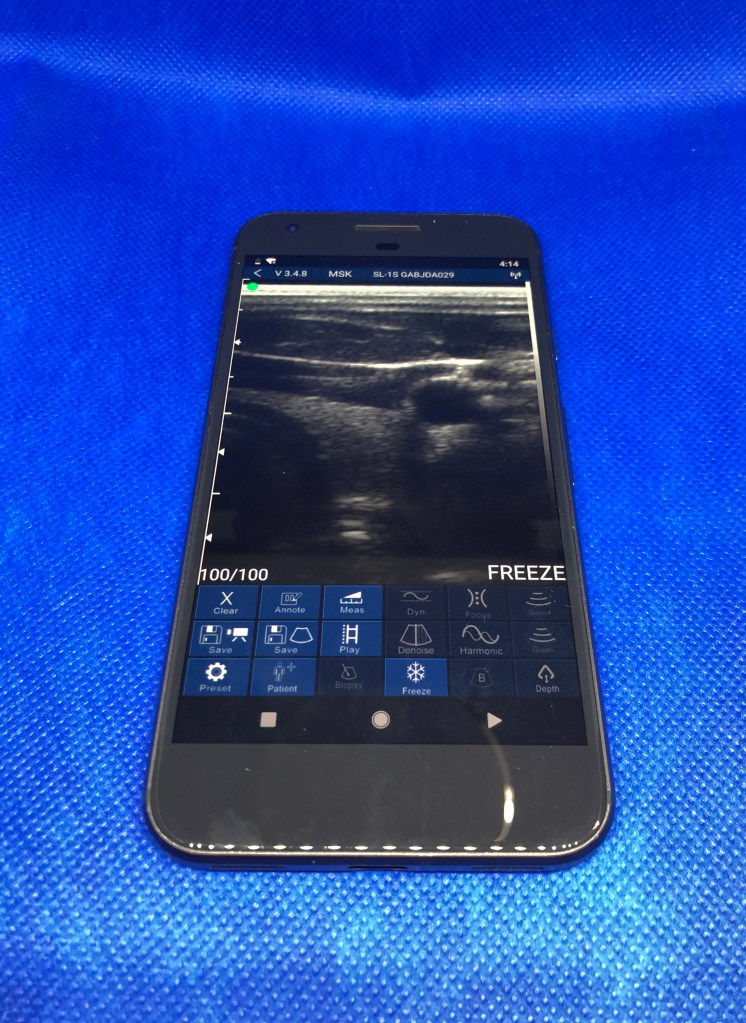

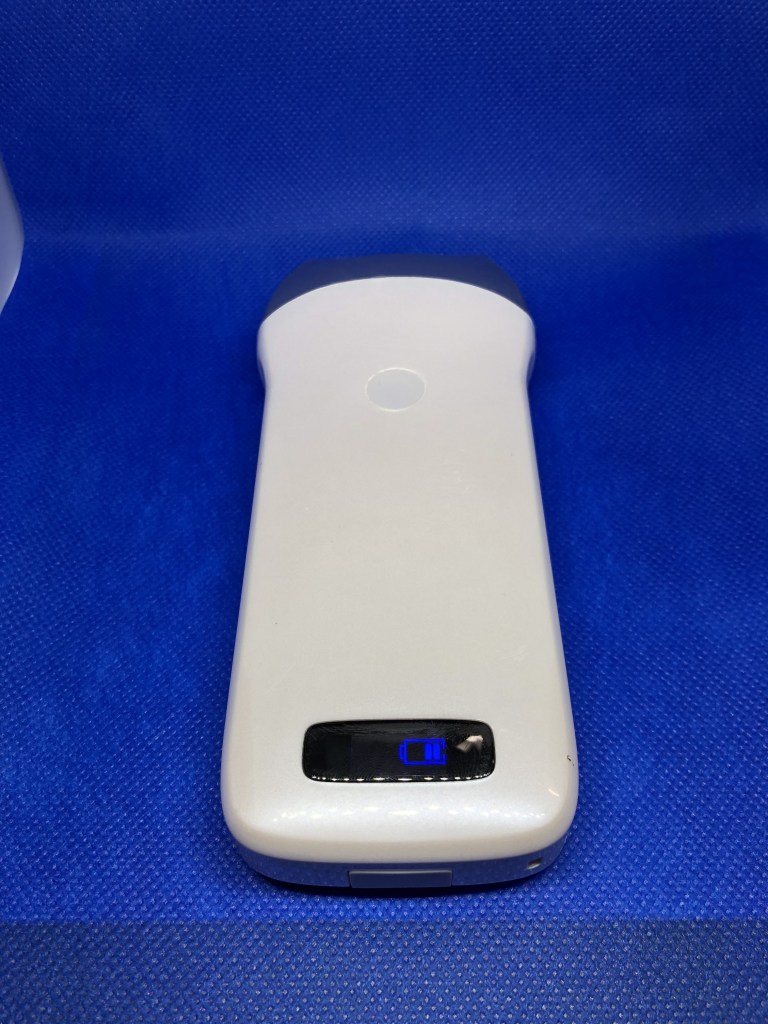

Over the next couple of months, we spent about $600 on an off-the-shelf wireless portable ultrasound (top left), a pair of cheap augmented reality lenses produced by Epson (that’s right, the printer company), and a used Google Pixel 1. If a picture is worth a thousand words, then we hope a video is worth a million.

This next video is shot from the perspective of a user wearing the augmented reality lenses. By tracking the “Spaceship 02” fiducial marker, we could display real-time ultrasound images at their anatomically correct position. You’ll have to excuse my excitement in the video, as this was our first successful demo.
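For readers curious how marker tracking can anchor an image in space, here is a minimal sketch of the general idea, not our actual implementation. It assumes a tracker has already reported the marker's pose as a 4x4 homogeneous transform in the camera frame, and that the offset between the marker and the ultrasound image plane is fixed and measured once. Every name here (`camera_from_marker`, `marker_from_image`, `image_quad_in_camera`) and every dimension is hypothetical.

```python
import math

def matmul(a, b):
    """Multiply two 4x4 matrices stored as nested lists."""
    return [[sum(a[i][k] * b[k][j] for k in range(4)) for j in range(4)]
            for i in range(4)]

def transform_point(m, p):
    """Apply a 4x4 homogeneous transform to a 3D point."""
    v = (p[0], p[1], p[2], 1.0)
    return tuple(sum(m[i][k] * v[k] for k in range(4)) for i in range(3))

def translation(tx, ty, tz):
    """Pure translation as a 4x4 homogeneous transform."""
    return [[1.0, 0.0, 0.0, tx],
            [0.0, 1.0, 0.0, ty],
            [0.0, 0.0, 1.0, tz],
            [0.0, 0.0, 0.0, 1.0]]

def rotation_z(theta):
    """Rotation about the z axis by theta radians."""
    c, s = math.cos(theta), math.sin(theta)
    return [[c, -s, 0.0, 0.0],
            [s, c, 0.0, 0.0],
            [0.0, 0.0, 1.0, 0.0],
            [0.0, 0.0, 0.0, 1.0]]

def image_quad_in_camera(camera_from_marker, marker_from_image, width_m, depth_m):
    """Return the four corners of the ultrasound image in camera coordinates.

    camera_from_marker: marker pose reported by the tracker (assumed input).
    marker_from_image:  fixed, pre-measured offset from marker to image plane.
    width_m, depth_m:   physical size of the scanned region in meters.
    """
    # Chain the transforms: image frame -> marker frame -> camera frame.
    camera_from_image = matmul(camera_from_marker, marker_from_image)
    half = width_m / 2.0
    # Corners in the image's own frame; y grows with scan depth.
    corners = [(-half, 0.0, 0.0), (half, 0.0, 0.0),
               (half, depth_m, 0.0), (-half, depth_m, 0.0)]
    return [transform_point(camera_from_image, c) for c in corners]

# Hypothetical numbers: marker 0.5 m in front of the camera, image plane
# starting 3 cm from the marker, 4 cm wide and 6 cm deep.
quad = image_quad_in_camera(translation(0.0, 0.0, 0.5),
                            translation(0.0, 0.03, 0.0),
                            width_m=0.04, depth_m=0.06)
```

Because the image corners are expressed relative to the marker, the rendered image moves and rotates with it: whenever the tracker reports a new `camera_from_marker`, recomputing the quad keeps the overlay attached to the same physical spot.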

Unfortunately, because of the pandemic, this has remained in patent limbo (patent pending).